As facts do not speak for themselves and each observer comes with ideational baggage, it is futile to strive for, or claim, objectivity. It can arise from filtering, reorganising and interpreting the same ‘facts’ according to narratives that reflect observers’ worldviews and political leanings about science, health, society and the role of government. Some may argue that hindsight bias is unavoidable – particularly in highly salient and complex cases with substantial uncertainty, such as the COVID-19 pandemic. For example, the fall-out from the Baby P case is thought to have contributed to social workers spending more time on risk management bureaucracy and less time with families, leading to more children being taken into care. This can, in turn, result in criticised officials or organisations developing management techniques that may reduce the reputational risk to themselves, but increase the risks to others. That understandable sensitivity may amplify hindsight bias among reviewers, while media campaigns can further feed the temptation to find scapegoats – potentially leading to individuals being unjustly singled out and punished. Those in charge of public inquiries are aware they may be accused of a ‘cover up’ or ‘whitewash’ if they conclude that a disaster was unavoidable and that no individual was at fault, given the conditions at the time. This flawed diagnosis fed a sense of fatalism among potential warners, instead of prompting experts to ‘up their game’ by becoming more persuasive, improving the relationships between experts and politicians, and/or designing better warning-response processes and systems. We found many studies were prone to exaggerate the availability and quality of early warnings, including in the influential case of the 1994 Rwandan genocide, leading to a mantra that ‘warning is not the problem, lack of political will is’. 
My colleagues and I encountered this phenomenon while researching our book on what it takes for warnings of mass atrocities or armed conflict to be noticed, prioritised, accepted and acted upon.

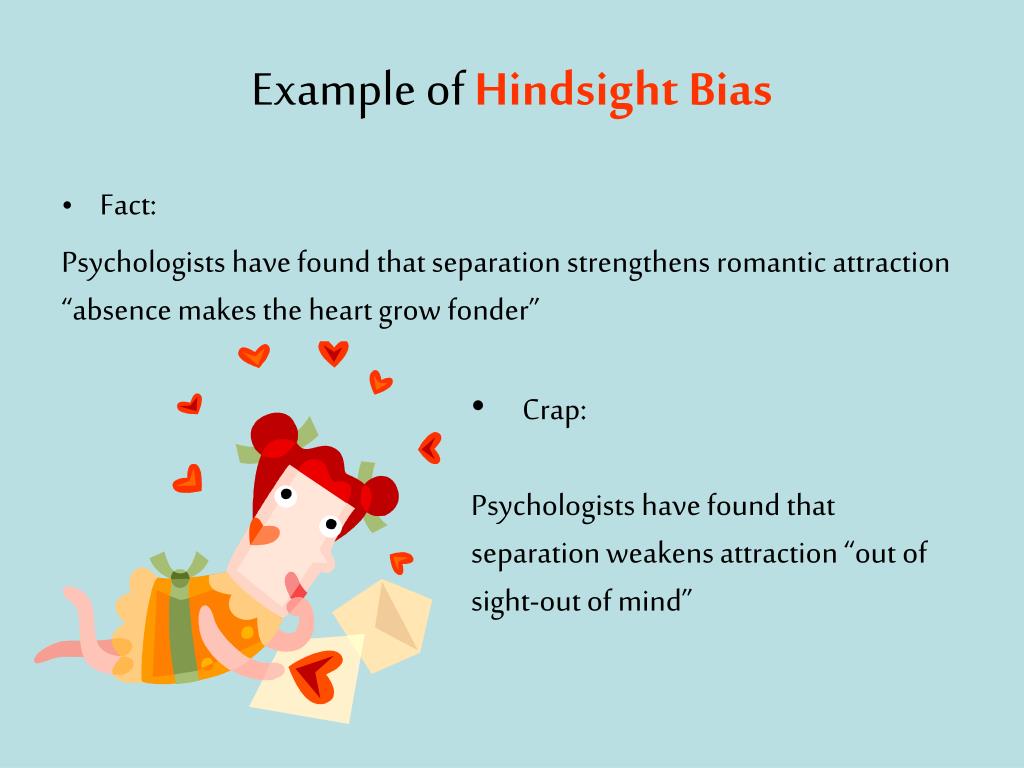

It can lead to misinterpretation of cause-effect relations, underestimation of the difficulty of taking decisions during periods of high uncertainty and pressure to act, and flawed prescriptions of how to overcome problems. Last year, Prime Minister Boris Johnson countered criticisms of his UK Government over the death toll in care homes by calling opposition leader Keir Starmer ‘Captain Hindsight’ – after the superhero from the South Park series who dishes out useless advice in the immediate aftermath of accidents and disasters.Ĭultural references aside, hindsight bias is a serious problem for public inquiries, whether they are aimed at lesson-learning, accountability, or a mixture of both. It can also be used by politicians as a defence in public. It can also affect organisations, political actors and news media in debates over who is to be blamed and what lessons should be learned from surprises, disasters, crises and failures. Hindsight bias doesn’t only distort the memories and reasoning of individuals.

Also known as the ‘knew it all along fallacy’, it is well-evidenced in psychological studies of medical diagnoses, auditing decisions and terrorist attacks. ‘Hindsight bias’ is the tendency to exaggerate in retrospect how predictable an event was at the time, either to ourselves or other people. The first in a series of IPPO blogs on the optimal design of COVID-related public inquiries discusses how to limit the risks of distorted memories, failure to contextualise, and over-reliance on certain experts Christoph Meyer
